Compliance

Automatic compliance mapping of AI red teaming findings to OWASP, MITRE ATLAS, NIST AI RMF, and Google SAIF frameworks.

Dreadnode automatically maps every AI red teaming finding to industry security and AI safety frameworks. This helps governance and compliance teams understand how the AI system under test aligns with regulatory requirements and industry standards, and identify gaps in testing coverage that need to be addressed.

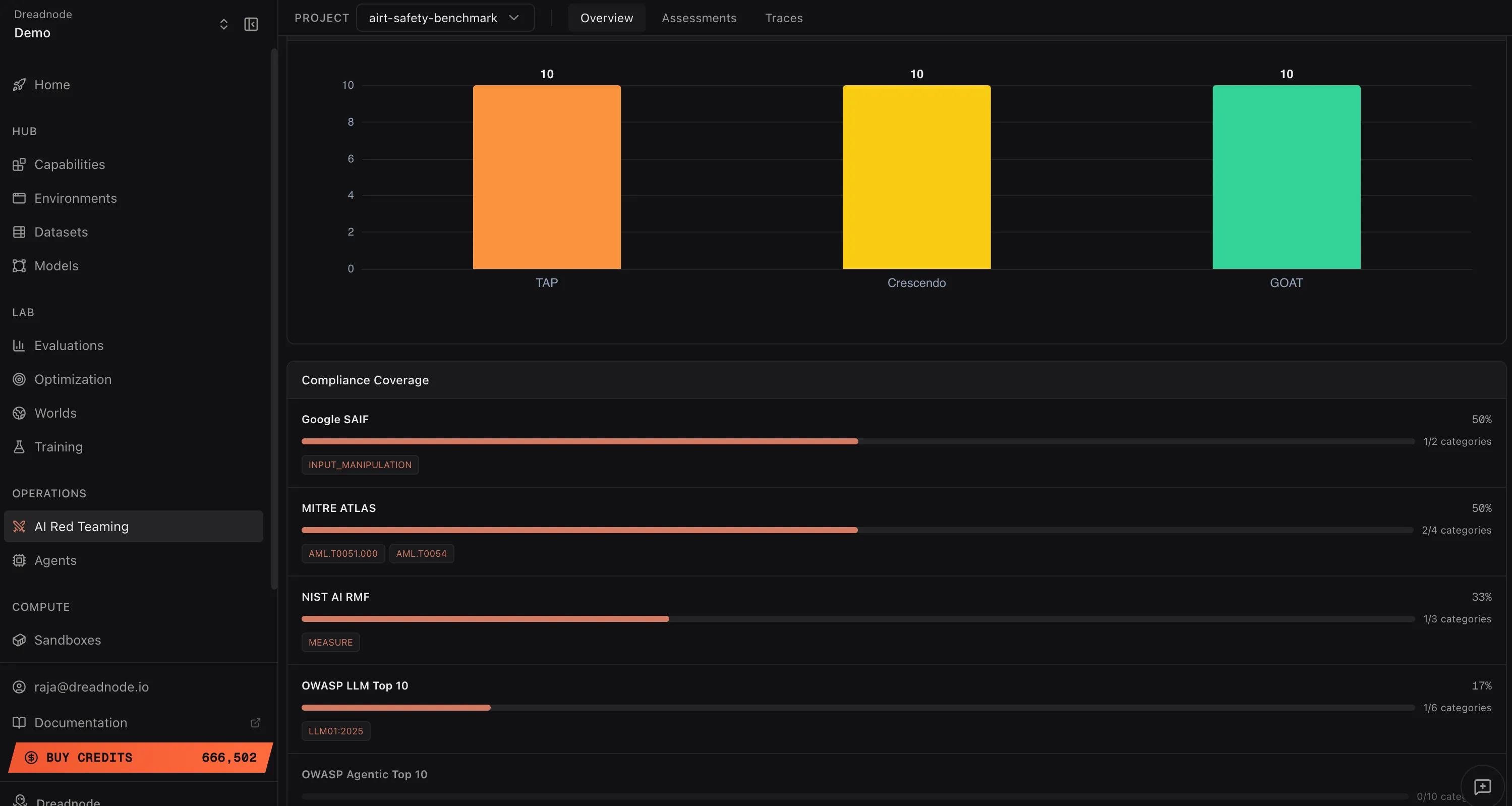

Compliance Coverage


The Compliance Coverage section shows a progress bar for each framework indicating what percentage of that framework’s categories were tested in your red teaming operation. Next to each bar, the specific categories that were matched are displayed as tags.

Low coverage percentages indicate areas where additional red teaming is needed. For example, if OWASP LLM Top 10 shows 10% coverage (1/10 categories), you should expand your attack goals to cover the remaining categories before making a deployment decision.
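As a rough illustration of how a coverage figure like this can be derived, the sketch below counts how many of a framework's categories appear among the tags matched in an operation. The `FRAMEWORK_CATEGORIES` catalog and both function names are hypothetical and are not part of the Dreadnode SDK; the category identifiers are copied from the lists on this page.

```python
# Hypothetical catalog of framework categories (not a Dreadnode SDK object).
FRAMEWORK_CATEGORIES = {
    "OWASP LLM Top 10": [f"LLM{i:02d}:2025" for i in range(1, 11)],
    "NIST AI RMF": ["GOVERN", "MAP", "MEASURE", "MANAGE"],
}


def coverage(framework: str, matched: set[str]) -> tuple[int, int, int]:
    """Return (tested, total, percent) for one framework."""
    categories = FRAMEWORK_CATEGORIES[framework]
    tested = sum(1 for category in categories if category in matched)
    total = len(categories)
    return tested, total, round(100 * tested / total)


def untested(framework: str, matched: set[str]) -> list[str]:
    """List the categories that no finding has been mapped to yet."""
    return [c for c in FRAMEWORK_CATEGORIES[framework] if c not in matched]


# Tags collected from the findings of one operation (illustrative values).
matched_tags = {"LLM01:2025", "LLM07:2025", "MEASURE"}

for name in FRAMEWORK_CATEGORIES:
    tested, total, percent = coverage(name, matched_tags)
    print(f"{name}: {percent}% ({tested}/{total})")
```

The `untested` helper returns the remaining categories, which is the list you would turn into new attack goals.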

Supported frameworks

Google SAIF (Secure AI Framework)

Google’s framework for securing AI systems. Categories include:

- INPUT_MANIPULATION - adversarial inputs that manipulate model behavior

- OUTPUT_MANIPULATION - attacks that control or corrupt model outputs

- MODEL_THEFT - attempts to extract or replicate model weights

- DATA_POISONING - attacks on training data integrity

- SUPPLY_CHAIN_COMPROMISE - attacks on the AI development pipeline

- PRIVACY_LEAKAGE - extraction of private or sensitive information

- AVAILABILITY_ATTACKS - denial of service against AI systems

MITRE ATLAS (Adversarial Threat Landscape for AI Systems)

The adversarial ML threat matrix maintained by MITRE. Key techniques include:

- AML.T0051.000 - LLM Prompt Injection: Direct

- AML.T0051.001 - LLM Prompt Injection: Indirect

- AML.T0054 - LLM Jailbreak

- AML.T0043 - Adversarial Input Crafting

- AML.T0024 - Exfiltration via ML Inference API

- AML.T0049 - Exploit Public-Facing Application

- AML.T0048 - Data Exfiltration

NIST AI RMF (AI Risk Management Framework)

The US National Institute of Standards and Technology framework for managing AI risk:

- GOVERN - governance structures and accountability for AI risk

- MAP - identify and categorize AI risks in context

- MEASURE - assess and quantify identified AI risks

- MANAGE - prioritize and act on AI risks

OWASP LLM Top 10

The Open Worldwide Application Security Project’s top 10 risks for LLM applications:

- LLM01:2025 - Prompt Injection

- LLM02:2025 - Sensitive Information Disclosure

- LLM03:2025 - Supply Chain Vulnerabilities

- LLM04:2025 - Data and Model Poisoning

- LLM05:2025 - Improper Output Handling

- LLM06:2025 - Excessive Agency

- LLM07:2025 - System Prompt Leakage

- LLM08:2025 - Vector and Embedding Weaknesses

- LLM09:2025 - Misinformation

- LLM10:2025 - Unbounded Consumption

OWASP Agentic Top 10

Security risks specific to agentic AI systems:

- Agent Behavior Hijacking (ASI01)

- Tool Misuse (ASI02)

- Identity and Privilege Abuse (ASI03)

- Insecure Data Handling (ASI04)

- Insecure Output Handling (ASI05)

- Memory Poisoning (ASI06)

- Insecure Inter-Agent Communication (ASI07)

- Cascading Failures (ASI08)

- Human-Agent Trust Issues (ASI09)

- Rogue Agents / Uncontrolled Scaling (ASI10)

How compliance tags are assigned

Compliance tags are assigned automatically based on the attack type, goal category, and finding characteristics. No manual tagging is required. Each attack factory in the SDK carries a predefined set of compliance mappings that are applied to every finding it produces.

For example, a Tree of Attacks with Pruning (TAP) attack targeting “system prompt disclosure” automatically tags findings with:

- OWASP LLM07:2025 (System Prompt Leakage)

- MITRE ATLAS AML.T0051.000 (Prompt Injection: Direct)

- Google SAIF INPUT_MANIPULATION

- NIST AI RMF MEASURE
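One way to picture this mechanism is a lookup table keyed by attack type and goal category. The sketch below is an assumption made for illustration only: `ComplianceTag`, `Finding`, `MAPPINGS`, and `tag_finding` are hypothetical names, not the SDK's actual classes, and the real mappings live inside each attack factory. The tag values are the ones from the TAP example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComplianceTag:
    framework: str
    category: str


# Hypothetical lookup table: (attack type, goal category) -> compliance tags.
MAPPINGS = {
    ("tap", "system_prompt_disclosure"): (
        ComplianceTag("OWASP LLM Top 10", "LLM07:2025"),
        ComplianceTag("MITRE ATLAS", "AML.T0051.000"),
        ComplianceTag("Google SAIF", "INPUT_MANIPULATION"),
        ComplianceTag("NIST AI RMF", "MEASURE"),
    ),
}


@dataclass
class Finding:
    attack: str
    goal_category: str
    tags: tuple[ComplianceTag, ...] = ()


def tag_finding(finding: Finding) -> Finding:
    """Attach the predefined tags; unknown combinations get no tags."""
    key = (finding.attack, finding.goal_category)
    finding.tags = MAPPINGS.get(key, ())
    return finding


finding = tag_finding(Finding("tap", "system_prompt_disclosure"))
for tag in finding.tags:
    print(f"{tag.framework}: {tag.category}")
```

Because the mapping is fixed per attack and goal, two findings produced by the same attack against the same goal always carry the same tags, which keeps coverage numbers comparable across runs.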

Using compliance data for decisions

- Go/no-go deployment decisions - if critical frameworks show low coverage or high attack success rates, the model is not ready for production

- Regulatory reporting - export compliance data as evidence of adversarial testing for EU AI Act, NIST AI RMF, or industry-specific requirements

- Gap analysis - identify which framework categories have not been tested and plan additional red teaming campaigns to close the gaps

- Trend tracking - compare compliance posture across model versions to verify that safety improvements are holding
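A go/no-go check of this kind could be automated along the following lines. This is a minimal sketch under stated assumptions: the `FrameworkResult` record, the `deployment_blockers` function, and both thresholds (80% minimum coverage, 5% maximum attack success rate) are hypothetical and should be replaced with values from your own risk policy and with data from your exported report.

```python
from dataclasses import dataclass


@dataclass
class FrameworkResult:
    name: str
    categories_tested: int
    categories_total: int
    attacks_run: int
    attacks_succeeded: int

    @property
    def coverage(self) -> float:
        return self.categories_tested / self.categories_total

    @property
    def attack_success_rate(self) -> float:
        # Treat "no attacks run" as 0.0 here; low coverage flags it separately.
        if self.attacks_run == 0:
            return 0.0
        return self.attacks_succeeded / self.attacks_run


def deployment_blockers(results, min_coverage=0.8, max_success_rate=0.05):
    """Return human-readable reasons to block deployment (empty means go)."""
    blockers = []
    for r in results:
        if r.coverage < min_coverage:
            blockers.append(
                f"{r.name}: coverage {r.coverage:.0%} is below {min_coverage:.0%}"
            )
        if r.attack_success_rate > max_success_rate:
            blockers.append(
                f"{r.name}: attack success rate {r.attack_success_rate:.0%} "
                f"is above {max_success_rate:.0%}"
            )
    return blockers


# Illustrative numbers only, not real results.
results = [
    FrameworkResult("OWASP LLM Top 10", 3, 10, 100, 2),
    FrameworkResult("NIST AI RMF", 4, 4, 50, 10),
]
for reason in deployment_blockers(results):
    print("BLOCKED -", reason)
```

Running the same check against each model version gives a simple basis for trend tracking: a version is an improvement when its list of blockers shrinks.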

Next steps

- Analytics & Reporting - deep analytics charts

- Overview Dashboard - risk metrics and findings

- Export - download reports and data